Brain Computer Interface: How To Get Started, Applications, Challenges And More

Learn how the human brain works, what a brain computer interface is, its applications, how to write a program that detects when a user blinks, and more

Table of contents

- Brain Computer Interface (BCI) 😎

- How Human Brain Works 🧠

- How Brain Computer Interface Works 🤖

- Achievements Using Brain Computer Interface 🏆

- Future Ideas Of Brain Computer Interface 🔥

- Challenges In Brain Computer Interface 🤐

- Practical Implementation Of BCI Technology 🖥

- Making Your Own BCI Programs ⌨

- Some Cool Projects You Can Make 🔥

- Important Note ‼

- Thanks

Brain Computer Interface (BCI) is a technology that uses our brain's electrical signals to communicate with a computer and do something meaningful. It is a remarkable technology that can help blind people see, paralysed people move, and deaf people hear! Theoretically, this technology can also be used to create powerful authentication systems, treat addictions, record dreams, and much more!

In this article, I will give you an overview of Brain Computer Interface: how it works, how body movements and thought based instructions are detected, some practical applications, futuristic ideas, challenges and a lot of other exciting stuff.

I will also show you how to write a JavaScript program that detects when a user blinks or smiles using Brain Computer Interface :)

Brain Computer Interface (BCI) 😎

It is a technology that lets a human brain interact with a computer. When you click on the Notepad icon to open Notepad on your computer, you are using a Graphical User Interface (GUI), because you are using graphical icons to interact with the computer.

Now imagine you simply think of opening Notepad on your computer and it opens automatically. This is a Brain Computer Interface, because you are using your brain to interact with the computer.

How Human Brain Works 🧠

To understand BCI, you need a basic understanding of how our brain works. The human brain is the most complex organ in our body, and scientists still do not completely understand how it works. But they do know which parts of the brain are mainly involved in which functions. For example:

- Movement of our hands and legs is initiated by the Motor Cortex and coordinated by the Cerebellum

- Forming and recalling memories relies heavily on the Hippocampus

- Emotions like happiness, joy and fear are processed largely by the Amygdala

- Our creativity and imagination are associated with the Frontal Cortex

- Things we see through our eyes are processed by the Occipital Lobe

- Things we hear through our ears are processed by the Temporal Lobe

- The taste of food is processed by the Insular Cortex

- Automatic functions in our body, such as the beating of the heart, are controlled by the Brain Stem

Our brain has billions of cells called neurons. When you touch a hot object, sensory neurons in your fingers sense the high temperature and immediately pass this information towards the brain, handing the message from one neuron to the next by releasing chemicals known as neurotransmitters. As a result, the nervous system instructs the muscles to pull the hand away from the hot object. This communication between hand and brain is carried out by neurons.

As the neurons exchange chemicals, there is a movement of charged ions, and as a result an electrical signal is generated. We can detect that signal using a device called an EEG machine, convert it into digital format and do something meaningful with it, such as lighting a bulb or moving a motor!

How Brain Computer Interface Works 🤖

There is always electrical activity going on in our brain, even when we are asleep. To detect those signals, we use a device called an electrode: a tiny metallic disc connected to a long wire. Using electrodes, we can pick up the electrical signals produced by our brain and transfer them to a computer for further processing.

If the electrodes are placed over the scalp, the procedure is known as Electroencephalography (EEG). If they are placed surgically on the exposed surface of the brain, under the skull, the procedure is known as Electrocorticography (ECoG).

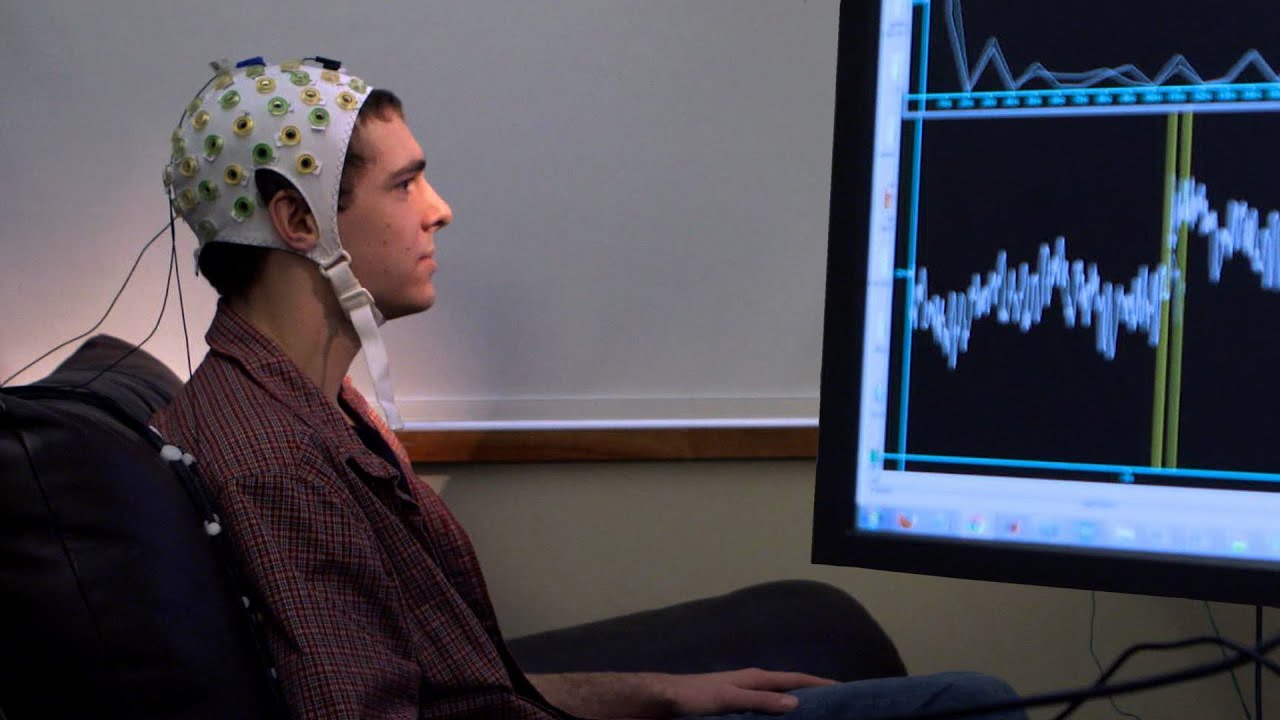

BCI devices contain electrodes that can be placed over the scalp or implanted inside the brain to receive the signals. Once the signals are received, they are amplified, converted into digital format and cleaned up using signal processing algorithms. Finally, one can use programming languages like C to do something meaningful with those signals!
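To make the "amplify and convert to digital" step a little more concrete, here is a minimal sketch in JavaScript. It is not how any particular BCI device works internally: the gain, the reference voltage, the bit depth and the sample readings are all assumed values chosen only for illustration.

```javascript
// Illustrative only: gain, reference voltage and bit depth are assumed values,
// not the specification of any real EEG amplifier.
const GAIN = 1000;  // scalp signals are tiny, so they are amplified first
const V_REF = 0.5;  // the converter accepts inputs between -V_REF and +V_REF volts
const BITS = 12;    // resolution of the analog-to-digital converter

// Amplify a raw voltage (in volts) and clamp it to the converter's input range.
function amplify(volts) {
  const amplified = volts * GAIN;
  return Math.max(-V_REF, Math.min(V_REF, amplified));
}

// Map an amplified voltage to an integer code between 0 and 2^BITS - 1.
function digitize(volts) {
  const levels = 2 ** BITS - 1;
  return Math.round(((volts + V_REF) / (2 * V_REF)) * levels);
}

// Made-up scalp readings in volts (a few tens of microvolts each).
const rawSamples = [0, 20e-6, -35e-6, 50e-6];
const digital = rawSamples.map((sample) => digitize(amplify(sample)));
console.log(digital);
```

Once the samples are plain integers like these, any program can filter them, look for patterns, or trigger an action.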

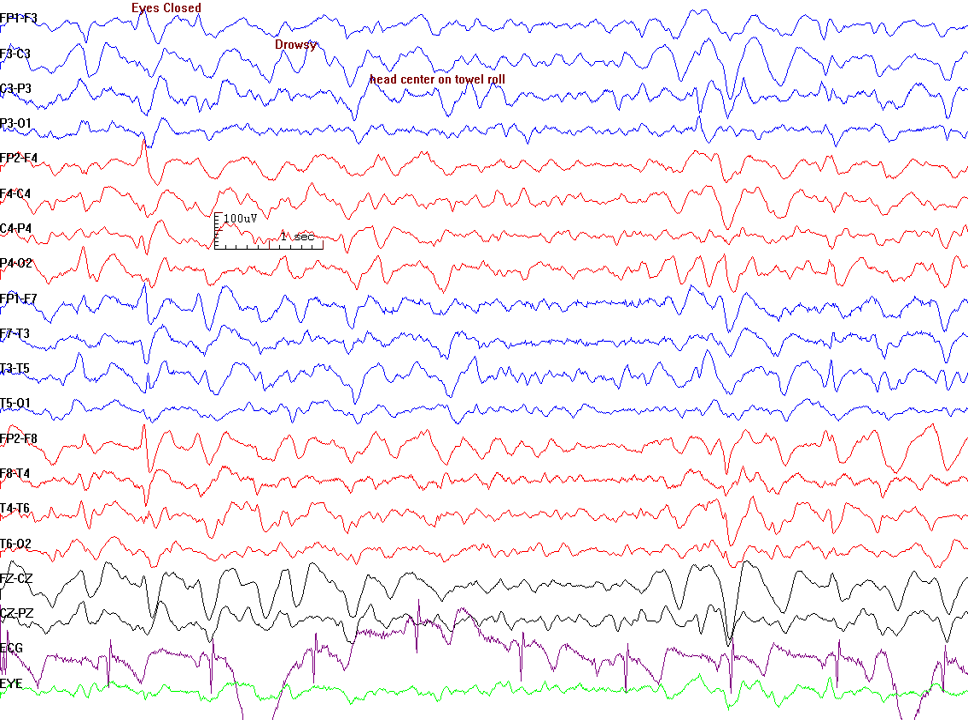

This is what the signals in our brain look like!

Remember the occipital and temporal lobes we discussed previously? A lobe is just a region of the brain that performs specific tasks. The brain is usually divided into 4 main lobes (frontal, parietal, temporal and occipital), or 6 if the insular and limbic lobes are also counted, and they handle different tasks such as movement of limbs and processing of senses (vision, hearing, taste etc).

A BCI device can give you the electrical signals picked up over each of these regions. Each electrode is labelled by its position (F is frontal, Fp is frontopolar, T is temporal, O is occipital etc).
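These labels follow the standard EEG naming convention, in which the letters give the region and the trailing character gives the side: odd numbers are on the left hemisphere, even numbers on the right, and "z" marks the midline. The sketch below decodes a label under that convention. The function name and the lookup table are my own, and the table only covers a few common prefixes.

```javascript
// Common region prefixes used in EEG electrode labels (not an exhaustive list).
const REGIONS = {
  Fp: 'frontopolar',
  AF: 'anterior frontal',
  F: 'frontal',
  FC: 'frontocentral',
  C: 'central',
  T: 'temporal',
  P: 'parietal',
  O: 'occipital',
};

// Split a label such as "O1" or "Fz" into its region and side.
// Returns null when the label does not fit the pattern or the prefix is unknown.
function describeElectrode(label) {
  const match = /^([A-Za-z]+?)(z|\d+)$/.exec(label);
  if (!match) return null;
  const [, letters, position] = match;
  const region = REGIONS[letters];
  if (!region) return null;
  if (position === 'z') return { region, side: 'midline' };
  // Odd numbers sit over the left hemisphere, even numbers over the right.
  const side = Number(position) % 2 === 1 ? 'left' : 'right';
  return { region, side };
}

for (const label of ['Fp1', 'T8', 'O1', 'Fz']) {
  console.log(label, describeElectrode(label));
}
```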

Understanding these signals is indeed difficult, but many BCI software packages automatically parse them for you.

Achievements Using Brain Computer Interface 🏆

Here are some of the achievements scientists have made in the field of brain computer interface, especially in the healthcare domain

Restoring Vision 👁

In 1978, William Dobelle's team implanted a BCI device with 68 electrodes onto the visual cortex of a man named Jerry, who suffered from total blindness. As a result, Jerry was able to sense the presence of light and see shades of grey around him. Unfortunately, this procedure required him to stay connected to a mainframe computer and also came with risks of scar formation or infection.

In 2002, another person named Jens Naumann, who was also blind, received Dobelle's second generation implant. This device was much better than the 1978 device, and immediately after the implant Jens reported that he had regained a limited sense of vision. Although his vision was still of low quality (tunnel vision / starry night effect), he was able to drive a car slowly around a parking area. Unfortunately, the device eventually stopped working and Jens became visually impaired again.

Today, several companies and research groups are working on BCI devices that can give people even better artificial vision.

Restoring Movement 👟

In 2001, a man named Matt Nagle was paralysed from the neck down due to a spinal cord injury. He later took part in the first human trial of Cyberkinetics's BrainGate chip implant, a 96 electrode BCI device implanted in the area of the brain responsible for arm movement. By 2005, Matt was able to control a robotic arm just by thinking about moving his hand!

Machine Control 🎮

There have been many experiments where people suffering from paralysis were able to control a computer mouse and play video games using BCI implants just by thinking about the activity

Future Ideas Of Brain Computer Interface 🔥

Here are some of the innovations that may be achieved in future by using the power of brain computer interface

- Transferring of thoughts from one person's brain to another (Telepathy)

- Reading a person's thoughts on a computer screen (Mind Reading)

- Recording a person's dreams in computer memory

- Downloading a person's memory inside a computer

These ideas may sound like science fiction. However, in theory they are possible, and many scientists are researching how to implement them in the real world! Some market estimates put the BCI device market at around $5 billion by 2030 (it was around $1.4 billion in 2022).

Challenges In Brain Computer Interface 🤐

The field of BCI is still in a research phase. There are many innovative ideas related to it; however, due to technical or ethical issues, turning those ideas into reality is hard. Some of the common challenges in BCI are:

- Electrical signals lose strength when travelling from the brain through the skull to the scalp. As a result, a BCI device placed over the scalp picks up weaker and noisier signals

- Surgically implanting a BCI device inside the brain carries risks such as infection, seizures or internal bleeding. Scar formation in the brain may also cause disability and signal loss

- A BCI device may fail to work properly, in which case another surgery would be needed to replace it

- There are concerns that implanting BCI devices in the brain may cause lasting changes in a person's personality

- BCI devices are highly expensive, and so is the surgery required to implant them

Practical Implementation Of BCI Technology 🖥

Let us learn how we can make our own BCI based projects. To detect brain signals, you need an EEG machine. Although most EEG machines are costly, luckily there are companies that sell affordable wearable EEG headsets for hobby BCI projects, such as NeuroSky and Emotiv. You can check their websites to learn more about these products.

In this article, I will tell you how you can make use of Emotiv's BCI devices to write your own BCI programs!

About Emotiv BCI Devices 🧠

Emotiv is an American company that develops wearable electroencephalography (EEG) devices in the form of neuroheadsets. These devices are often used by hobbyists to develop brain computer interface based applications. Emotiv provides 3 such devices; this article uses the EPOC.

Emotiv's EPOC has 14 sensors (electrodes) that are placed over the scalp and can therefore give you a lot of detail about your brain activity. Unfortunately, Emotiv's software is not open source, so you don't get the raw data of each sensor. What you get instead is processed information such as:

- Expressions (Smile, Laugh, Blink etc)

- Mood (Relaxed, Stressed, Focused etc)

- Thoughts / Cognitive Actions (push, pull, rotate, drop etc)

You can use this information to do something meaningful. For example, when a user thinks of the command "push", you can detect it using Emotiv's EPOC / EPOC-X and run code that rotates the motor of a robotic arm!

Making Your Own BCI Programs ⌨

Now comes the fun part! Here I will show you how to write your own BCI programs using Emotiv's EPOC to do some cool stuff.

There are many ways to do this. You can either use Emotiv's own software or write JavaScript. In this article, I will use epoc.js, a JavaScript framework for interacting with Emotiv's EPOC device.

Using this, you can get brain data such as facial expressions, head movements and thought commands!

To get started, install the epocjs framework by referring to this guide and then you are good to go!

Program To Detect When A User Blinks His Eye

```javascript
var Epoc = require('epocjs')();

Epoc.connectToLiveData("<path to your profile file>", function(event){
  if(event.blink > 0){
    console.log('Blinking')
  }
})
```

- In this code, we first initialize the epocjs framework by calling the function returned by require('epocjs') and storing the resulting object in the Epoc variable

- Once you start the EPOC device and record your brain data, it gets stored in a profile file. We pass that file's path as the first argument to Epoc.connectToLiveData()

- The framework then calls the callback we passed to connectToLiveData() with an "event" object. That event object contains meaningful details about our brain activity

- Each detail has a value: 0 means false and anything greater than 0 means true. So if event.blink is greater than 0, the user is blinking, and you will see the log message "Blinking" on the screen
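One practical detail: if the callback fires several times while a single blink is in progress, the program above logs "Blinking" several times too. I have not verified how often epocjs delivers events, so treat that as an assumption. Under it, a small wrapper can count a blink only when event.blink goes from 0 to a positive value. The sketch uses simulated event objects so it runs without a headset, and createBlinkCounter is a name I made up for illustration.

```javascript
// Returns a handler that reports a blink only on the transition from
// "not blinking" to "blinking", so one long blink is counted once.
function createBlinkCounter(onBlink) {
  let wasBlinking = false;
  let count = 0;
  return function handleEvent(event) {
    const isBlinking = event.blink > 0;
    if (isBlinking && !wasBlinking) {
      count += 1;
      onBlink(count);
    }
    wasBlinking = isBlinking;
    return count;
  };
}

// Simulated events standing in for the ones the headset would deliver.
const simulatedEvents = [{ blink: 0 }, { blink: 1 }, { blink: 1 }, { blink: 0 }, { blink: 1 }];
const handleBlink = createBlinkCounter((n) => console.log(`Blink #${n}`));
simulatedEvents.forEach((event) => handleBlink(event));
```

With a real headset, the same handler could be passed as the callback to Epoc.connectToLiveData() in place of the inline function.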

Let's see some other examples using the epocjs framework

Program To Detect When A User Smiles

```javascript
var Epoc = require('epocjs')();

Epoc.connectToLiveData("<path to your profile file>", function(event){
  if(event.smile > 0){
    console.log('Smiling')
  }
})
```

Program To Detect When A User Looks Around

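```javascript
var Epoc = require('epocjs')();

Epoc.connectToLiveData("<path to your profile file>", function(event){
  // Which gyro axis corresponds to which direction is assumed here;
  // check it on your own headset and swap the messages if needed
  if(event.gyroX){
    console.log("Looking Up Or Down")
  }
  if(event.gyroY){
    console.log("Looking Left Or Right")
  }
})
```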
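A bare truthiness check reacts to any non-zero gyro reading, however small. A slightly more robust sketch is to ignore small readings (a "dead zone") and report only the dominant direction. The threshold value, the sign convention (positive means up or right) and the axis mapping (gyroX vertical, gyroY horizontal) are all assumptions; none of them come from the epocjs documentation.

```javascript
// Readings smaller than this are treated as noise (assumed value).
const DEAD_ZONE = 10;

// Classify one event into a head-movement direction.
// Assumptions: gyroX is vertical, gyroY is horizontal, and positive values
// mean up/right. Verify all of this against your own headset.
function headDirection(event, deadZone = DEAD_ZONE) {
  const vertical = event.gyroX || 0;
  const horizontal = event.gyroY || 0;
  if (Math.abs(vertical) < deadZone && Math.abs(horizontal) < deadZone) {
    return 'still';
  }
  // Report whichever axis moved the most.
  if (Math.abs(vertical) >= Math.abs(horizontal)) {
    return vertical > 0 ? 'up' : 'down';
  }
  return horizontal > 0 ? 'right' : 'left';
}

console.log(headDirection({ gyroX: 2, gyroY: -3 }));
console.log(headDirection({ gyroX: 40, gyroY: 5 }));
console.log(headDirection({ gyroX: 3, gyroY: -25 }));
```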

Program To Detect Thought Based Commands

This program detects when a user thinks of one of the thoughts listed in the program

```javascript
var Epoc = require('epocjs')();

Epoc.connectToLiveData("<path to your profile file>", function(event){
  if(event.cognitivAction > 0 && event.cognitivActionPower > 0){
    switch(event.cognitivAction){
      case 2:
        console.log('push')
        break;
      case 4:
        console.log('pull')
        break;
      case 8:
        console.log('lift')
        break;
      case 16:
        console.log('drop')
        break;
      case 32:
        console.log('left')
        break;
      case 64:
        console.log('right')
        break;
      case 128:
        console.log('rotate left')
        break;
      case 256:
        console.log('rotate right')
        break;
      case 512:
        console.log('rotate clockwise')
        break;
      case 1024:
        console.log('rotate counter clockwise')
        break;
      case 2048:
        console.log('rotate forwards')
        break;
      case 4096:
        console.log('rotate reverse')
        break;
      case 8192:
        console.log('disappear')
        break;
    }
  }
})
```

The case values 2, 4, 8, 16 etc are predefined codes, each with a special meaning. For example, code 1024 means your brain gave the instruction to rotate counter clockwise. You can detect these thoughts or instructions using epocjs and perform some task based on them :)
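Since each code maps to exactly one name, the long switch statement can also be written as a lookup table. The sketch below copies the codes from the program above and runs on a simulated event object. The actionName helper is my own, and the power value of 0.6 is just a sample number, because I have not checked what range cognitivActionPower actually takes.

```javascript
// Codes copied from the switch statement in this article; each is a power of two.
const COGNITIVE_ACTIONS = {
  2: 'push',
  4: 'pull',
  8: 'lift',
  16: 'drop',
  32: 'left',
  64: 'right',
  128: 'rotate left',
  256: 'rotate right',
  512: 'rotate clockwise',
  1024: 'rotate counter clockwise',
  2048: 'rotate forwards',
  4096: 'rotate reverse',
  8192: 'disappear',
};

// Translate an event into an action name, or null when nothing is detected
// or the code is not in the table.
function actionName(event) {
  if (!(event.cognitivAction > 0 && event.cognitivActionPower > 0)) return null;
  return COGNITIVE_ACTIONS[event.cognitivAction] || null;
}

// Simulated event; a real one would arrive in the connectToLiveData callback.
console.log(actionName({ cognitivAction: 1024, cognitivActionPower: 0.6 }));
```

A table like this is easier to extend than a switch, and the same object can later map codes to functions instead of strings.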

If you wonder how this device can recognize thoughts, it relies on a pre-trained model that can detect the presence of these thoughts in the signals received. If you want to control more thoughts, you need to create your own model. For this, you can use Emotiv's Cortex API, which can be used from different languages like C++, C#, JavaScript, Python etc.
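To my knowledge, the Cortex API is a JSON-RPC 2.0 service that you reach over a WebSocket. Treat that, and the getCortexInfo method name used below, as assumptions to check against Emotiv's documentation. The sketch does not open any connection; it only shows how JSON-RPC request and response messages are shaped, which is the part that stays the same in every language. The helper names are my own.

```javascript
let nextId = 1;

// Build a JSON-RPC 2.0 request string. The method names and parameters you
// pass in must come from Emotiv's Cortex documentation, not from this sketch.
function buildRequest(method, params) {
  const request = { jsonrpc: '2.0', id: nextId++, method };
  if (params !== undefined) request.params = params;
  return JSON.stringify(request);
}

// Parse a JSON-RPC response, throwing if the server reported an error.
function parseResponse(text) {
  const message = JSON.parse(text);
  if (message.error) {
    throw new Error(`${message.error.code}: ${message.error.message}`);
  }
  return { id: message.id, result: message.result };
}

// "getCortexInfo" is assumed to be a valid, parameterless Cortex method.
console.log(buildRequest('getCortexInfo'));
```

The id field is what lets you match each response to the request that caused it when several requests are in flight on the same socket.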

Some Cool Projects You Can Make 🔥

Here are some of the projects you can try making by using Emotiv's EPOC device

- A virtual keyboard and mouse that can be controlled by a person's thoughts

- A robotic arm built with an Arduino, servo motors and Emotiv's EPOC device that can lift small objects using a person's thoughts

- A program that lets a person turn on the lights and fans of their room just by thinking about it. You can use tools like Arduino and IoT modules for this
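As a starting point for the last idea, here is a minimal sketch of the decision logic only. It toggles a light when the "push" thought (code 2 in the program above) is detected with enough power, and then ignores further detections for a short cooldown so one thought does not flip the light repeatedly. The threshold, the cooldown, the command strings and the sendToDevice function are all hypothetical; real hardware control (for example, writing to an Arduino) would replace sendToDevice.

```javascript
// Hypothetical stand-in for real hardware control; it only records commands.
const sentCommands = [];
function sendToDevice(command) {
  sentCommands.push(command);
}

const MIN_POWER = 0.5;     // assumed minimum power needed to accept a thought
const COOLDOWN_MS = 2000;  // ignore repeated detections for this long

// Returns an event handler that toggles the light on a strong "push" thought.
// `now` can be replaced with a fake clock for testing.
function createLightSwitch(send, now = Date.now) {
  let lightOn = false;
  let lastToggle = -Infinity;
  return function handleEvent(event) {
    const isPush = event.cognitivAction === 2; // 2 is the "push" code
    const strongEnough = event.cognitivActionPower >= MIN_POWER;
    if (!isPush || !strongEnough) return lightOn;
    if (now() - lastToggle < COOLDOWN_MS) return lightOn;
    lightOn = !lightOn;
    lastToggle = now();
    send(lightOn ? 'LIGHT_ON' : 'LIGHT_OFF');
    return lightOn;
  };
}

const handleThought = createLightSwitch(sendToDevice);
handleThought({ cognitivAction: 2, cognitivActionPower: 0.8 }); // toggles the light
handleThought({ cognitivAction: 2, cognitivActionPower: 0.8 }); // ignored: within cooldown
console.log(sentCommands);
```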

Important Note ‼

BCI devices manufactured by Emotiv and NeuroSky are fine for experimenting with brain computer interfaces. However, they only give basic details about our brain. To get more detailed information, you need a BCI device with more electrodes, and possibly an implant, to get stronger and clearer signals. Also, such devices may sometimes take a while to process your thoughts and execute your code, which can be annoying.

Thanks

I would like to thank Hashnode for providing such an amazing platform to share thoughts and ideas. I hope this article helps you get a basic understanding of Brain Computer Interface. If you have any questions, let me know in the comment section or send me a message on my Instagram or LinkedIn :)